The Growing Imperative for Ethical AI in Business

AI ethics in enterprise isn’t just a nice-to-have anymore; it’s table stakes. Companies using artificial intelligence are getting scrutinized by everyone from government regulators to customers demanding fair, transparent systems.

Get this wrong, and the fallout is brutal. Imagine an AI rejecting loan applications unfairly or screening out qualified job candidates. Suddenly you’re facing lawsuits, PR nightmares, and customers leaving. But here’s the flipside: companies nailing ethical AI attract better talent, keep customers loyal, and sleep easier when regulations change.

Smart businesses have figured out something counterintuitive: ethical constraints don’t stifle innovation, they focus it. Baking ethics into AI development from day one leads to more reliable systems and fewer nasty surprises down the road. Let’s face it: unchecked tech usually ends badly.

Why Boards and C-Suite Executives Are Paying Attention

Boardrooms are finally waking up to AI ethics—and not a moment too soon. Directors who used to glaze over at tech talk now demand answers about bias, privacy, and fairness. Why? Because they’ve seen companies get hammered with multimillion-dollar fines and stock dips after AI failures.

For the C-suite, this isn’t philosophy—it’s risk management 101. CFOs see ethical AI as insurance against regulatory fines. CIOs know governance prevents technical disasters. And CMOs? They’ve learned customers will criticize brands over unfair AI faster than you can say “algorithmic bias.”

Identifying Common Ethical Pitfalls in AI Implementation

Most AI ethics failures boil down to a few avoidable mistakes. The big one? Bias creeping into systems that decide who gets hired, who gets a loan, or who receives medical care. It’s like building a house on uneven ground: everything looks fine until the walls start cracking.

Bias sneaks in through backdoors: training data that reflects old prejudices, features that miss key context, or testing that skips fairness checks. That’s why smart teams build bias detection into every development stage instead of tacking it on as an afterthought.

Data Quality and Representation Issues

Bad data makes bad AI—it’s that simple. Train a hiring algorithm mostly on male engineers’ resumes, and guess what? It’ll keep favoring male engineers. These aren’t just “oops” moments; they’re lawsuits waiting to happen.

And representation gaps? They’re like blind spots in a car’s mirrors. If your AI only understands urban millennials, rural seniors might as well be invisible. This isn’t just unfair—it’s terrible for business when whole customer segments get ignored.
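One way to catch representation gaps before training is to compare each group’s share of the training data with its share of the population the system will serve. The sketch below assumes you already have a group label for every training record and a reference distribution (for example, from your customer base). The function name, the 0.5 tolerance, and the segment figures are hypothetical choices for illustration, not a standard.

```python
from collections import Counter

def representation_gaps(training_groups, reference_shares, tolerance=0.5):
    """Return groups whose share of the training data is below
    `tolerance` times their share of the reference population."""
    counts = Counter(training_groups)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("training data is empty")
    gaps = {}
    for group, expected_share in reference_shares.items():
        observed_share = counts.get(group, 0) / total
        if observed_share < tolerance * expected_share:
            # (observed share in training data, expected share in population)
            gaps[group] = (round(observed_share, 3), expected_share)
    return gaps

# Hypothetical segment labels and shares, for illustration only.
training = (["urban millennials"] * 700 + ["suburban families"] * 280
            + ["rural seniors"] * 20)
customer_base = {"urban millennials": 0.40, "suburban families": 0.40,
                 "rural seniors": 0.20}
print(representation_gaps(training, customer_base))
# → {'rural seniors': (0.02, 0.2)}
```

Rural seniors make up 20% of the hypothetical customer base but only 2% of the training data, so they are flagged; the other segments pass.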

Transparency and Explainability Gaps

Black-box AI terrifies everyone—for good reason. When no one can explain why an algorithm denied your mortgage or flagged your health claim, trust evaporates overnight. Banks and hospitals especially feel this heat, with regulators demanding clear explanations for AI decisions.

The fix? Ditch the “trust us, it’s magic” approach. Build systems that can at least show their work, for example by reporting which inputs pushed a given decision one way or the other, even if the math underneath is complex. Customers and regulators will thank you.
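For simple models, showing their work can be as direct as reporting how much each input contributed to a decision. The sketch below uses a hypothetical linear credit score (the feature names, weights, intercept, and threshold are all made up for illustration) and returns the factors ranked from most harmful to most helpful, roughly the idea behind the reasons lenders give declined applicants. Complex models need attribution methods such as SHAP to approximate comparable explanations.

```python
# Hypothetical weights for an illustrative linear score; not a real lending model.
WEIGHTS = {"income_k": 0.04, "debt_ratio": -3.0, "late_payments": -0.8}
INTERCEPT = 0.5
THRESHOLD = 1.0

def score_with_reasons(applicant):
    """Return (decision, score, contributions ranked most negative first)."""
    # In a linear model each feature's contribution is simply weight * value.
    contributions = {f: round(w * applicant[f], 3) for f, w in WEIGHTS.items()}
    score = round(INTERCEPT + sum(contributions.values()), 2)
    decision = "approve" if score >= THRESHOLD else "decline"
    reasons = sorted(contributions.items(), key=lambda item: item[1])
    return decision, score, reasons

decision, score, reasons = score_with_reasons(
    {"income_k": 50, "debt_ratio": 0.6, "late_payments": 2}
)
print(decision, score)   # decline -0.9
print(reasons[:2])       # [('debt_ratio', -1.8), ('late_payments', -1.6)]
```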

Inadequate Accountability Structures

Here’s a scary thought: many companies deploy AI with no clear owner for ethical outcomes. It’s like launching a product with no QA team—eventually, things blow up. That’s why leading firms now have AI ethics officers and governance committees watching this stuff like hawks.

Strategies for Building Responsible AI Frameworks

Winning at responsible AI deployment means treating ethics like seatbelts—built-in, not bolted on as an afterthought. It takes work, but the alternative is much worse.

Establish Clear AI Ethical Guidelines

Start by writing down what “ethical AI” actually means for your company. Not vague platitudes—real rules about what’s okay and what’s not. A hospital’s guidelines will differ from a bank’s, but both need clear guardrails.

Good guidelines answer practical questions: Who approves new AI systems? How do we handle ethical red flags? What happens when things go sideways? Think of it as an instruction manual for not screwing up.

Implement Governance Frameworks

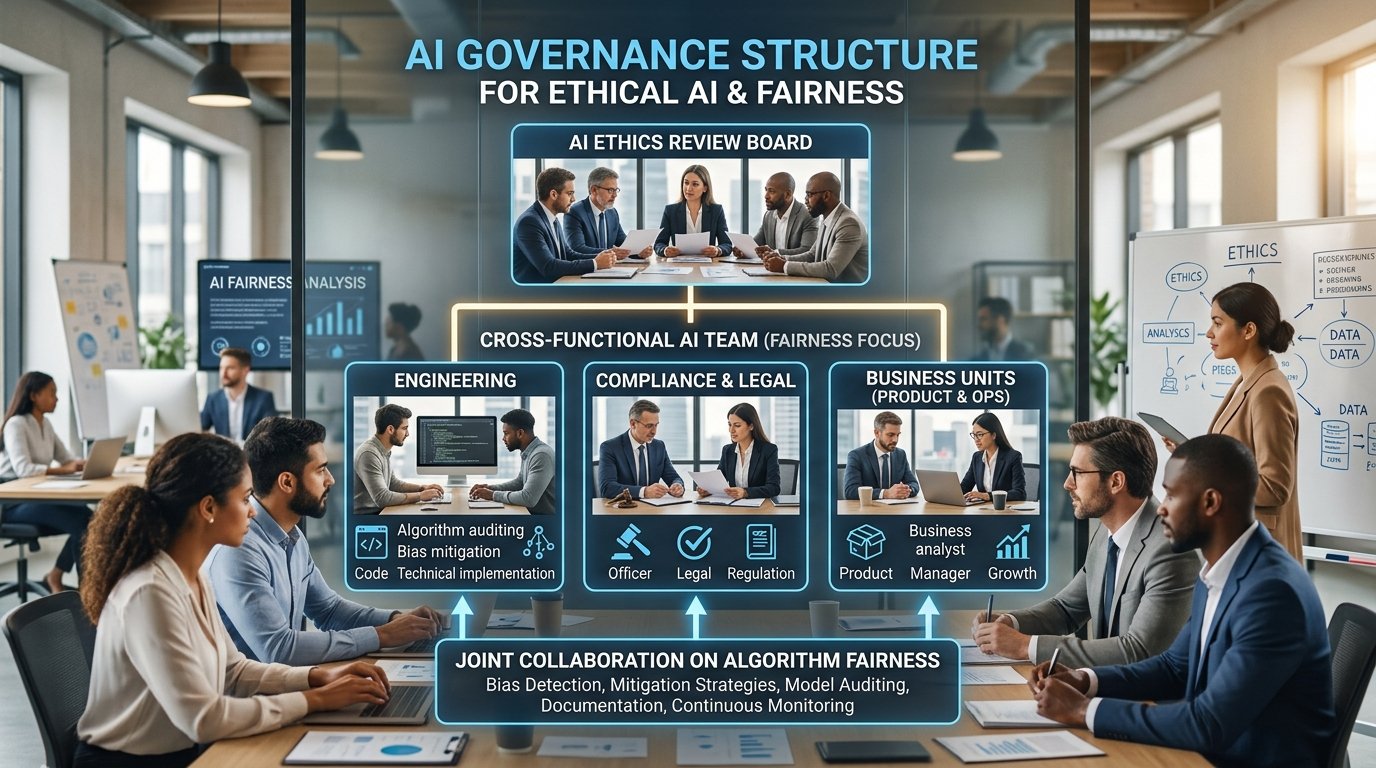

Solid AI governance needs structure, not just good intentions. That means:

- An ethics review team examining AI projects before launch

- Documenting what data trained the model and its known flaws

- Regular checkups to catch bias that creeps in over time

- A plan for when (not if) something breaks

- Ongoing monitoring after deployment

This works best when someone powerful owns it—think Chief Ethics Officer or Chief Data Officer. And it needs real teeth; governance that can’t say “no” is just paperwork.
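The checklist above, plus the requirement that governance can actually say “no,” can be encoded as a launch gate. In this minimal sketch the `LaunchReview` fields and the `launch_decision` function are hypothetical names that mirror the list items; a real process would pull these answers from your review records rather than from booleans typed by hand.

```python
from dataclasses import dataclass

@dataclass
class LaunchReview:
    system_name: str
    owner: str                      # accountable executive, e.g. a Chief Data Officer
    ethics_review_passed: bool
    data_and_flaws_documented: bool
    bias_checkups_scheduled: bool
    incident_plan_ready: bool
    monitoring_enabled: bool

def launch_decision(review):
    """Return ("approved" | "blocked", list of unmet requirements)."""
    requirements = {
        "no accountable owner": bool(review.owner.strip()),
        "ethics review not passed": review.ethics_review_passed,
        "training data and known flaws not documented": review.data_and_flaws_documented,
        "no recurring bias checkups scheduled": review.bias_checkups_scheduled,
        "no incident response plan": review.incident_plan_ready,
        "post-deployment monitoring not enabled": review.monitoring_enabled,
    }
    blockers = [msg for msg, met in requirements.items() if not met]
    # Any unmet requirement blocks launch: the gate has real veto power.
    return ("blocked" if blockers else "approved"), blockers

review = LaunchReview("resume-screener-v2", owner="", ethics_review_passed=True,
                      data_and_flaws_documented=True, bias_checkups_scheduled=True,
                      incident_plan_ready=True, monitoring_enabled=False)
print(launch_decision(review))
# ('blocked', ['no accountable owner', 'post-deployment monitoring not enabled'])
```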

Develop Technical Safeguards

Ethical AI needs technical muscle. Automated bias detectors should scan models the way spellcheck scans for typos. Documentation, such as model cards explaining a system’s intended use and limits, helps teams use AI responsibly.

Think of it like a car’s safety features. You wouldn’t drive without airbags; don’t deploy AI without these protections.
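An automated bias check can run on every model build, much as a spellchecker runs on every document. A minimal sketch, assuming binary decisions and a known group label per decision, computes each group’s selection rate and compares it with the highest rate. The 0.8 default echoes the “four-fifths” rule of thumb from US employment-selection guidance, but the right metric and threshold depend on your domain and jurisdiction; the function names are hypothetical.

```python
def selection_rates(decisions):
    """decisions: iterable of (group, selected) pairs, selected being a bool."""
    totals, selected = {}, {}
    for group, was_selected in decisions:
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + int(was_selected)
    return {g: selected[g] / totals[g] for g in totals}

def disparate_impact_check(decisions, threshold=0.8):
    """Return (impact ratio per group, sorted list of groups below threshold)."""
    rates = selection_rates(decisions)
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group has any positive decisions; ratio is undefined")
    ratios = {g: rate / best for g, rate in rates.items()}
    flagged = sorted(g for g, ratio in ratios.items() if ratio < threshold)
    return ratios, flagged

# Hypothetical outcomes: group A selected 50/100 times, group B 30/100 times.
outcomes = [("A", i < 50) for i in range(100)] + [("B", i < 30) for i in range(100)]
ratios, flagged = disparate_impact_check(outcomes)
print(flagged)  # ['B'] because 0.30 / 0.50 = 0.6 < 0.8
```

In a CI job, one option is to fail the build whenever `flagged` is non-empty and route the model to human review.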

Build Cross-Functional Collaboration

Engineers alone can’t solve ethics puzzles. You need a mix of data scientists, lawyers, business folks, and maybe even philosophers. Each spots different risks: compliance sees regulatory traps, domain experts catch context-specific fairness issues, and product managers understand customer impact.

It’s like making a movie—great results need writers, directors, and editors working together. Solo acts usually flop.

Regulatory Landscape and Compliance Considerations

AI regulation is moving faster than a startup’s growth metrics. Staying compliant means building governance that adapts as rules change.

Key Regulatory Frameworks

The EU’s AI Act is the big one: it sets strict rules for high-risk AI systems, including transparency and human-oversight requirements. Industry-specific rules pile on top: finance wants explainable lending decisions, healthcare requires validated diagnostic tools, and employment anti-discrimination laws apply to hiring decisions whether a person or an algorithm makes them.

Translation? Map your AI against all relevant rules, or risk waking up to enforcement letters.

Documenting AI Systems for Regulatory Compliance

Regulators love paperwork. Keep detailed records on training data, model choices, and testing results; in short, everything. It’s like keeping receipts for a tax audit: the more you have, the smoother it goes.

Impact assessments matter too. Show you’ve thought about how AI decisions affect different groups and what safeguards exist. Update this as systems evolve—static documentation is useless.
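Records are easier to keep current when they live in a structured, versioned format rather than in scattered documents. The sketch below is one hypothetical shape for such a record, loosely inspired by model cards; the `ModelRecord` fields, the `new_version` helper, and all example values are illustrative assumptions, not a regulatory template, so check what your applicable rules actually require.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date

@dataclass
class ModelRecord:
    model_name: str
    version: str
    training_data: str          # source, date range, known gaps
    model_choice: str           # architecture and why it was chosen
    test_results: dict          # e.g. accuracy and fairness metrics per group
    affected_groups: list       # impact assessment: who the decisions touch
    safeguards: list            # mitigations in place for those groups
    known_limitations: list
    last_reviewed: str = field(default_factory=lambda: date.today().isoformat())

    def to_json(self):
        return json.dumps(asdict(self), indent=2, sort_keys=True)

def new_version(record, version, **changes):
    """Create an updated copy instead of editing in place, preserving the audit trail."""
    data = asdict(record)  # deep copy of all fields
    data.update(changes)
    data["version"] = version
    data["last_reviewed"] = date.today().isoformat()
    return ModelRecord(**data)

# All values below are hypothetical.
v1 = ModelRecord("loan-screener", "1.0", "2019-2023 applications",
                 "gradient-boosted trees", {"auc": 0.81},
                 ["first-time borrowers"], ["manual review of declines"],
                 ["sparse data for applicants under 21"])
v2 = new_version(v1, "1.1", test_results={"auc": 0.83})
print(v2.to_json())
```

Keeping every version, rather than overwriting one file, is what lets you show an auditor how the system and its safeguards evolved.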

Managing Liability and Risk

AI liability is still a legal gray area, but courts and regulators are increasingly holding companies accountable for AI failures. Companies cutting corners face fines, lawsuits, and brand damage. Some insurers offer AI coverage, but policies have more holes than Swiss cheese.

Your best defense? Documented governance, rigorous testing, and transparency. When mistakes happen (and they will), showing good-faith effort matters more than perfection.

Future-Proofing Your Business with Ethical AI Practices

Ethical AI isn’t a compliance checkbox—it’s competitive armor. Companies doing this right today will outlast competitors scrambling to catch up tomorrow.

Building an AI Ethics Culture

Real change needs cultural roots. Employees should feel safe calling out ethical concerns without fear. Leaders must walk the talk—allocating budget, promoting ethical wins, and sometimes killing profitable but questionable projects.

Training helps too. Teach data scientists bias detection, product managers ethical implications, and executives governance basics. Knowledge spreads responsibility beyond the ethics team.

Continuous Monitoring and Improvement

Ethical AI isn’t “set it and forget it.” Models degrade over time as the data they see in production drifts away from the data they were trained on. Regular audits catch these shifts before they cause harm.
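Drift can be quantified. One widely used measure is the Population Stability Index (PSI), which compares how a feature or score was distributed at training time with how it is distributed now: PSI = Σ (actual share - expected share) × ln(actual share / expected share), summed over a set of bins. The sketch below uses equal-width bins over the training data’s range; the function name, the bin count, and the common rule of thumb that values above about 0.25 signal significant drift are conventions rather than standards, so tune them for your data.

```python
import math

def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline sample and a new sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width when all baseline values match

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp values outside the baseline range into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

baseline = [i / 100 for i in range(100)]        # scores seen at training time
shifted = [0.5 + i / 200 for i in range(100)]   # hypothetical production scores
print(round(psi(baseline, baseline), 4))        # 0.0
print(psi(baseline, shifted) > 0.25)            # True: flag for review
```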

Create feedback loops too—employees and customers often spot issues metrics miss. A simple “see something, say something” channel works wonders.

Staying Ahead of Regulatory Evolution

Regulations will keep changing. Stay plugged into industry groups and legal updates to anticipate shifts. Better yet, exceed minimum requirements—customers notice when companies go beyond compliance.

Measuring and Communicating Ethical Impact

What gets measured gets managed. Track fairness metrics (such as gaps in approval or error rates between groups), governance review times, and stakeholder feedback. Then share the results; transparency builds trust that “ethical AI” isn’t just marketing fluff.

FAQ: Common Questions About AI Ethics in Enterprise

What’s the difference between AI ethics and AI governance?

Ethics is the “what”—our principles about right and wrong. Governance is the “how”—structures making those principles real. One’s your moral compass, the other’s your steering wheel.

How do organizations detect bias in AI systems?

Mix math and human judgment. Statistical tests spot performance gaps between groups. Fairness metrics quantify different bias types. Then domain experts sanity-check whether decisions make sense. It’s part science, part art.
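The statistical-test part can start with something as simple as a two-proportion z-test on error rates: is the gap between two groups larger than chance would explain? A minimal sketch using only the standard library follows; the function name and the example counts are hypothetical. A small p-value suggests the gap is probably real, not that it is unjustified, which is where the domain experts come in.

```python
import math

def error_rate_gap_test(errors_a, n_a, errors_b, n_b):
    """Two-sided two-proportion z-test comparing error rates of groups A and B."""
    p_a, p_b = errors_a / n_a, errors_b / n_b
    pooled = (errors_a + errors_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("pooled error rate is 0 or 1; the test is undefined")
    z = (p_a - p_b) / se
    # Two-sided p-value: P(|Z| >= |z|) = erfc(|z| / sqrt(2)) for a standard normal Z.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical audit: 60 errors in 400 cases for group A vs. 30 in 400 for group B.
z, p = error_rate_gap_test(60, 400, 30, 400)
print(f"z = {z:.2f}, p = {p:.4f}")
```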

What are the main regulatory risks for AI systems?

Discrimination lawsuits, massive fines under new AI laws, privacy violations, and brand damage when failures go viral. Good governance reduces these risks dramatically.

How can small organizations implement AI ethics?

Start small but start. Even a lightweight ethics review group helps—gather your tech lead, legal person, and a customer-facing manager to vet AI projects. Document decisions. It’s about intentionality, not bureaucracy.

What should organizations do if they discover bias in a deployed AI system?

Act fast but thoughtfully. Assess the damage. Tell affected parties honestly. Fix the model or pull it. Learn from mistakes. How you handle failure often matters more than the failure itself.

Conclusion

AI ethics in enterprise has graduated from optional to essential. Companies treating this as window dressing will struggle, while those baking ethics into their DNA will lead.

The recipe? Clear principles, strong governance, technical safeguards, and diverse perspectives. As regulations tighten and customers get savvier, ethical AI becomes business-critical.

Waiting is risky. Start building your framework now—your future competitors already are. For deeper dives, explore current research on AI ethics from top think tanks.
